[Signify HoF]

While developing the Hue Edge application, I discovered an authentication vulnerability in a Signify API endpoint.

I reported the authentication bypass to the company, and it was fixed shortly afterwards.

As a result, my name was added to the Hall of Fame alongside other members of the security community, researchers, and ethical hackers.

[Hue Edge]

An Android (SDK 24-30) application built on the Samsung Slook SDK for controlling Philips Hue lights from the Edge panel (Cocktail panel, Edge Single Plus mode). Written in Java.

Features:

- Toggle lights, rooms, groups, zones and apply scenes via the edge panel

- Works even from the lock screen

- Add and remove buttons to your liking

- Apply custom icons to the panel buttons

- Press and hold to control brightness, color, saturation, and color temperature

- Pull-down to refresh and see the status at a glance

- Guided setup helps you discover and connect to your Philips Hue Bridge

- Separate categories for different types of actions

- Currently limited to control of the Philips Hue Bridge on the same local network
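To illustrate what toggling a light over the local network involves, here is a minimal sketch of the request the Hue Bridge's v1 local API expects: a `PUT` to the light's `state` resource with an `on` flag. This is not the app's actual code. The class name, the bridge address, and the username are hypothetical, and `java.net.http` is used only to keep the sketch self-contained on a desktop JVM (it is not available on Android, where a different HTTP client would be needed).

```java
import java.net.URI;
import java.net.http.HttpRequest;

public class HueToggleSketch {

    // Builds the v1 local API URL for a light's state resource.
    static String stateUrl(String bridgeIp, String username, int lightId) {
        return "http://" + bridgeIp + "/api/" + username + "/lights/" + lightId + "/state";
    }

    // JSON body that switches a light on or off.
    static String onOffBody(boolean on) {
        return "{\"on\":" + on + "}";
    }

    // Assembles the PUT request without sending it.
    static HttpRequest toggleRequest(String bridgeIp, String username, int lightId, boolean on) {
        return HttpRequest.newBuilder()
                .uri(URI.create(stateUrl(bridgeIp, username, lightId)))
                .header("Content-Type", "application/json")
                .PUT(HttpRequest.BodyPublishers.ofString(onOffBody(on)))
                .build();
    }

    public static void main(String[] args) {
        // Hypothetical bridge address and username, for illustration only.
        HttpRequest request = toggleRequest("192.168.1.2", "example-user", 1, true);
        System.out.println(request.method() + " " + request.uri());
    }
}
```

Sending the request is left out on purpose, since it needs a real bridge and a username registered with it.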

[CanTracker]

An Android (SDK 21-30) app that I wrote in Kotlin as a hobby project.

It organizes a collection of cans: you scan a barcode, and the can is added to the collection along with its details. Most details are extracted from the barcode, and some are found with a Google Custom Search Engine (CSE).

The app uses Retrofit to make requests to the search engine and Moshi to deserialize the returned JSON into Kotlin data objects.

The app also leverages ViewModel, LiveData, Data Binding with binding adapters, and Navigation with the SafeArgs plugin for passing parameters between fragments. Accessibility is implemented in the form of custom content descriptions for all views. The project uses Material Design with navigation transitions for a consistent and modern experience.
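As a rough illustration of working with barcode data, the sketch below validates an EAN-13 code and reads its three-digit GS1 prefix. It assumes the cans carry EAN-13 barcodes, which is a guess on my part here rather than something the app requires. The class and method names are hypothetical, and the example is in Java rather than the app's Kotlin.

```java
public class Ean13Sketch {

    // Returns true when the string is 13 digits with a valid EAN-13 check digit.
    static boolean isValidEan13(String code) {
        if (code == null || !code.matches("\\d{13}")) {
            return false;
        }
        int sum = 0;
        for (int i = 0; i < 12; i++) {
            int digit = code.charAt(i) - '0';
            // Counting from 1, odd positions weigh 1 and even positions weigh 3.
            sum += (i % 2 == 0) ? digit : digit * 3;
        }
        int expectedCheck = (10 - sum % 10) % 10;
        return expectedCheck == code.charAt(12) - '0';
    }

    // Returns the three-digit GS1 prefix, or null for an invalid code.
    static String gs1Prefix(String code) {
        return isValidEan13(code) ? code.substring(0, 3) : null;
    }

    public static void main(String[] args) {
        String code = "4006381333931";
        System.out.println(code + " valid: " + isValidEan13(code));
        System.out.println("GS1 prefix: " + gs1Prefix(code));
    }
}
```

The GS1 prefix identifies the GS1 member organization that issued the number, not necessarily the country where the product was made, so it is only a hint about a can's origin.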

[Penumbra]

A robotic cat creature built on the Arduino platform. Made of wooden sticks, glue, 12 servos, and a sonar sensor. Controlled first via a mobile phone and later via an Xbox Wireless gamepad.

The simulation, named Antumbra AI, was created using Unity and ML-Agents.

Creating my own communication protocol and software made it possible to control the robot via keyboard or gamepad and see it move both on-screen and in real life.
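To give an idea of what a small servo-control protocol can look like, here is a hypothetical four-byte frame: a start marker, a servo index, a target angle, and an XOR checksum. This is not the protocol Penumbra actually uses. The frame layout, the `0xFF` marker, the 0-180 degree range, and all names are assumptions made for illustration; only the count of 12 servos comes from the project.

```java
public class ServoFrameSketch {

    // Hypothetical start marker; servo, angle, and checksum bytes never reach 0xFF.
    static final int START = 0xFF;
    static final int SERVO_COUNT = 12;
    static final int MAX_ANGLE = 180;

    // Encodes one command as: start marker, servo index, angle, XOR checksum.
    static byte[] encode(int servo, int angle) {
        if (servo < 0 || servo >= SERVO_COUNT) {
            throw new IllegalArgumentException("servo must be 0-11");
        }
        if (angle < 0 || angle > MAX_ANGLE) {
            throw new IllegalArgumentException("angle must be 0-180");
        }
        return new byte[] {(byte) START, (byte) servo, (byte) angle, (byte) (servo ^ angle)};
    }

    // Decodes a frame into {servo, angle}, or returns null when it is malformed.
    static int[] decode(byte[] frame) {
        if (frame == null || frame.length != 4) {
            return null;
        }
        int start = frame[0] & 0xFF;
        int servo = frame[1] & 0xFF;
        int angle = frame[2] & 0xFF;
        int check = frame[3] & 0xFF;
        if (start != START || check != (servo ^ angle)) {
            return null;
        }
        if (servo >= SERVO_COUNT || angle > MAX_ANGLE) {
            return null;
        }
        return new int[] {servo, angle};
    }

    public static void main(String[] args) {
        byte[] frame = encode(3, 90);
        int[] command = decode(frame);
        System.out.println("servo " + command[0] + " -> " + command[1] + " degrees");
    }
}
```

A fixed-size frame with a checksum keeps parsing on a microcontroller simple, and the same frames can drive both a simulated and a physical robot.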

[Controlling a Collaborative Robot Using Hand Gestures (Bachelor Thesis)]

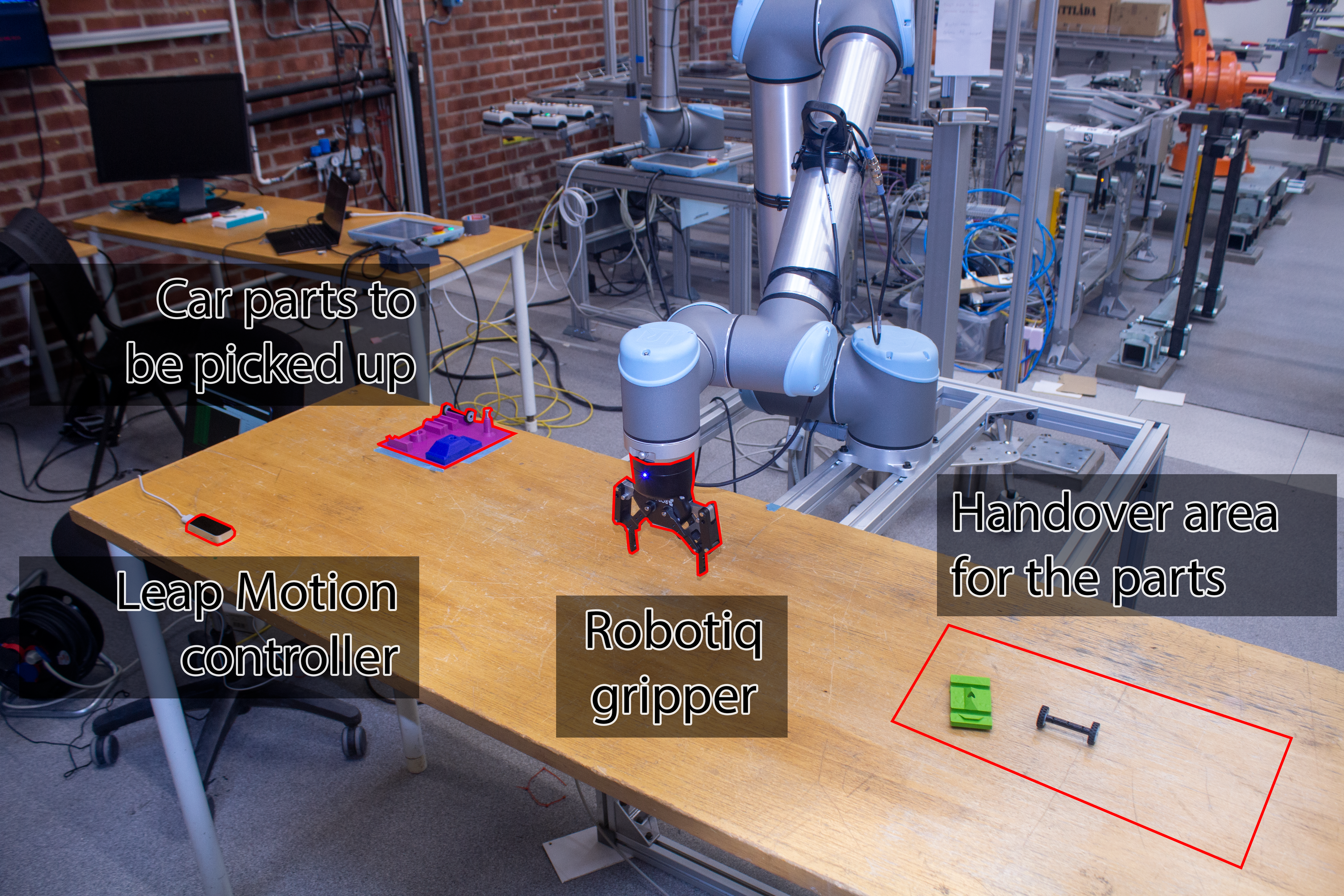

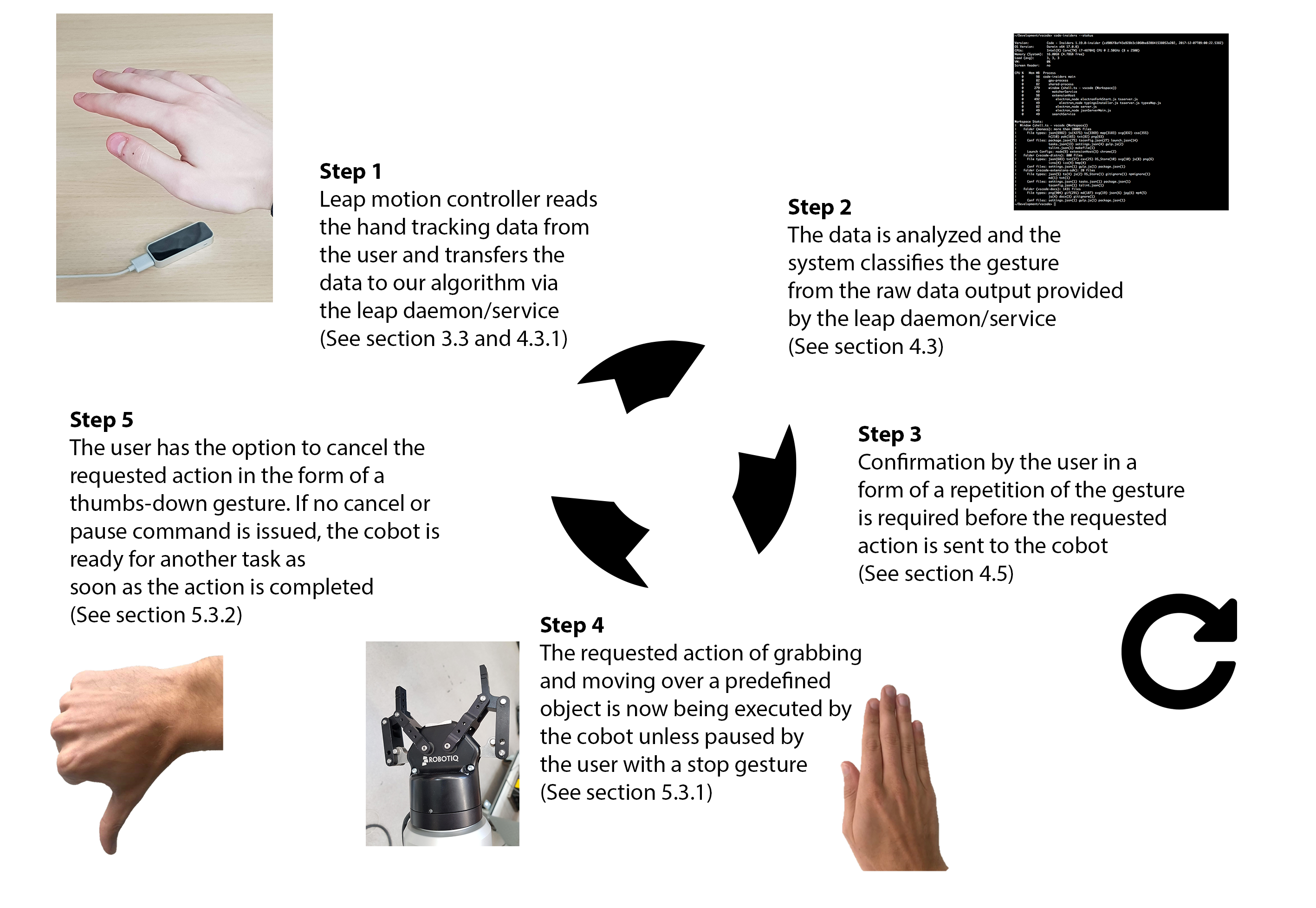

Working in a team of five, we created software that lets a collaborative robot (cobot) be controlled with hand gestures via a Leap Motion sensor.

The team was amazing and everyone contributed tremendously to different areas of the project.

I acted as a kind of team leader, although in practice this meant taking on varying tasks depending on where I could bring the most value at a given moment.

The result was fantastic, and we received the highest possible grade with very positive feedback from our supervisors.